Before walking through the steps, it helps to understand what data processing in research means. It is a series of steps that turns unorganised, raw data into a form you can analyse quickly. Simply put, data processing is the conversion of sources such as vector files, email lists, and data lakes into valuable insight.

Let’s say you intend to build an email list of marketing managers on the Gold Coast. This data is scattered across websites, LinkedIn and other social networks, and the emails appear in various formats. When you capture them from such a variety of sources, the collected dataset is treated as unorganised data. Because it can carry deep insight, data analysts evaluate it to extract intelligence: decisions, strategies, valuable information or patterns. Analysts then use that intelligence to drive business progress.

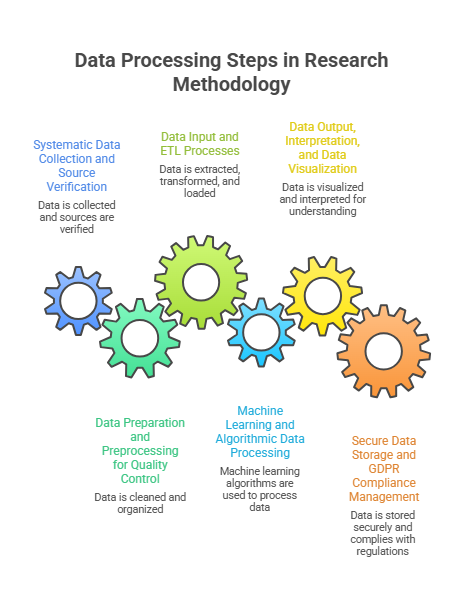

Steps Involved in the Processing of Data in Research Methodology:

Step 1: Systematic Data Collection and Source Verification

Data collection is the process of capturing and measuring the intended data in a standardised manner. Data extractors pull records from data lakes and warehouses. At the same time, the authenticity of each source is verified. The collected dataset is then tested through hypotheses and validation checks.
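One simple way to picture source verification is an allow-list check on where each record came from. The sketch below is only an illustration: the `TRUSTED_DOMAINS` set, the `is_trusted_source` helper and the sample URLs are assumptions made for this example, not part of any particular tool.

```python
from urllib.parse import urlparse

# Hypothetical allow-list of domains considered authentic sources.
TRUSTED_DOMAINS = {"linkedin.com", "example.com"}

def is_trusted_source(url):
    """Return True if the URL's host is a trusted domain or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in TRUSTED_DOMAINS)

# Made-up collected records, each tagged with the page it was captured from.
collected = [
    {"email": "jane@example.com", "source": "https://www.linkedin.com/in/someone"},
    {"email": "sam@example.org", "source": "https://linkedin.com.evil.test/profile"},
    {"email": "lee@example.com", "source": "not a url"},
]

verified = [r for r in collected if is_trusted_source(r["source"])]
print([r["email"] for r in verified])
```

Matching on the full host name, rather than searching for the domain anywhere in the URL, keeps look-alike addresses from passing the check.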

Step 2: Data Preparation and Preprocessing for Quality Control

Also known as preprocessing, data preparation cleans and organises data to ready it for the next stage. An organisation whose core business lies in a different niche can outsource this work to a reliable data entry service provider. Because raw data contains errors, it is captured, extracted and then passed through a verification funnel, which is how redundancy is eliminated. This process paves the way to high-quality data for deriving business intelligence.
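To make the cleaning and de-duplication idea concrete, here is a minimal sketch for the email list example. The `clean_emails` function and its deliberately simple pattern are assumptions for illustration; production-grade email validation is considerably more involved.

```python
import re

# Deliberately simple pattern for illustration only.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")

def clean_emails(raw_emails):
    """Normalise, validate and de-duplicate raw email strings, keeping first-seen order."""
    seen = set()
    cleaned = []
    for raw in raw_emails:
        email = raw.strip().lower()
        # Strip a leading "mailto:" left over from scraped links.
        if email.startswith("mailto:"):
            email = email[len("mailto:"):]
        # Drop entries that do not look like an email address.
        if not EMAIL_PATTERN.match(email):
            continue
        # Drop duplicates (redundancy elimination).
        if email in seen:
            continue
        seen.add(email)
        cleaned.append(email)
    return cleaned

raw = [" Jane.Doe@Example.com ", "jane.doe@example.com",
       "mailto:sam@example.org", "not-an-email", ""]
print(clean_emails(raw))  # ['jane.doe@example.com', 'sam@example.org']
```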

Step 3: Data Input and ETL (Extract, Transform, Load) Processes

Technically, data processing carries out ETL (Extract, Transform and Load). The previous steps deal with extraction and the beginning of the transformation. Now the data needs to be translated into a format that the target system and its users can understand. The predefined goal determines how the data should be interpreted. This is how meaning takes shape in the form of comprehensible data.
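The three ETL stages can be sketched with Python's standard library alone. Everything here is assumed for the sake of the example: the inline `RAW_CSV` sample, the `contacts` table and the three helper functions. A real pipeline would read from actual sources and write to a persistent store.

```python
import csv
import io
import sqlite3

# Made-up raw input with inconsistent casing, standing in for an extracted file.
RAW_CSV = """name,email,city
Jane Doe,JANE@EXAMPLE.COM,Gold Coast
Sam Lee,sam@example.org,gold coast
"""

def extract(text):
    """Extract: parse the raw CSV text into a list of dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))

def transform(rows):
    """Transform: trim whitespace and standardise casing."""
    return [
        (r["name"].strip(), r["email"].strip().lower(), r["city"].strip().title())
        for r in rows
    ]

def load(records, conn):
    """Load: write the transformed records into a table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS contacts (name TEXT, email TEXT PRIMARY KEY, city TEXT)"
    )
    conn.executemany("INSERT OR REPLACE INTO contacts VALUES (?, ?, ?)", records)
    conn.commit()

conn = sqlite3.connect(":memory:")  # in-memory database, for demonstration only
load(transform(extract(RAW_CSV)), conn)
rows = conn.execute("SELECT name, email, city FROM contacts ORDER BY email").fetchall()
print(rows)
```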

Step 4: Machine Learning and Algorithmic Data Processing

When automated, this step is carried out by machine learning algorithms. They surface previously unseen patterns, which are then selected and processed according to the scope of the research. Let’s say you want to reshape patients’ data into an analytical format for diagnosis through apps or software. This step executes the algorithms that tap into those patterns.
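As a toy illustration of pulling a pattern out of data, the sketch below groups a handful of made-up patient readings into two clusters with a basic one-dimensional k-means. The readings, the `kmeans_1d` helper and the choice of k-means itself are assumptions for this example; the article does not prescribe a specific algorithm, and real diagnostic work would rely on a vetted library and validated data.

```python
def kmeans_1d(values, k=2, iterations=20):
    """Very small 1-D k-means (expects k >= 2 and at least k values)."""
    ordered = sorted(values)
    # Deterministic start: spread the initial centres across the sorted values.
    centres = [ordered[i * (len(ordered) - 1) // (k - 1)] for i in range(k)]
    clusters = [[] for _ in range(k)]
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        # Assignment step: attach every value to its nearest centre.
        for v in values:
            nearest = min(range(k), key=lambda i: abs(v - centres[i]))
            clusters[nearest].append(v)
        # Update step: move each centre to the mean of its cluster.
        new_centres = [
            sum(c) / len(c) if c else centres[i] for i, c in enumerate(clusters)
        ]
        if new_centres == centres:
            break  # converged
        centres = new_centres
    return centres, clusters

# Made-up resting heart-rate readings, for illustration only.
readings = [62, 65, 64, 98, 101, 66, 99]
centres, clusters = kmeans_1d(readings)
print(centres)
print(clusters)
```

The two groups that emerge (readings in the 60s and readings around 100) were never labelled in advance, which is the sense in which the algorithm "pulls out" a pattern.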

Step 5: Data Output, Interpretation, and Data Visualization

This stage makes the data usable for people who are not familiar with handling raw data. Analysts visualise it in a format that a novice can easily make sense of. In simple words, the layout is prepared so that end users can understand it at a glance. Strategists in the business intelligence domain can then draw conclusions from the visual data through deeper analysis.
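Even a plain-text chart shows how a summary becomes readable at a glance. The sketch below counts how many emails came from each source and prints a bar per source. The `text_bar_chart` helper and the sample labels are assumptions for illustration; in practice a charting library or BI tool would do this job.

```python
from collections import Counter

def text_bar_chart(labels, width=20):
    """Render counts per label as a text bar chart (expects a non-empty list)."""
    counts = Counter(labels)
    largest = max(counts.values())
    lines = []
    for label, count in counts.most_common():
        # Scale each bar relative to the most frequent label.
        bar = "#" * max(1, round(count / largest * width))
        lines.append(f"{label:<12} {bar} {count}")
    return "\n".join(lines)

# Made-up source labels for the collected emails.
sources = ["LinkedIn"] * 4 + ["Website"] * 2 + ["Other"]
chart = text_bar_chart(sources)
print(chart)
```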

Step 6: Secure Data Storage and GDPR Compliance Management

Now it is time to save the data in a place where you can easily retrieve it. This can be a data warehouse, a hard disk or a repository. This final stage is completed with care. While making the data compliant with privacy regulations such as GDPR, the network engineer keeps it protected. In doing so, the network engineer takes the lead in defining and assigning authentication and access criteria. A fast turnaround time remains a core priority throughout.
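Two common building blocks at this stage are pseudonymising personal identifiers before storage and restricting who may do what with the stored data. The sketch below illustrates both in a very reduced form. The key, the role names and the helper functions are hypothetical, and using them does not by itself make a system GDPR compliant.

```python
import hashlib
import hmac

# Hypothetical key; in practice it would live in a secrets manager, not in code.
SECRET_KEY = b"replace-with-a-securely-stored-key"

def pseudonymise(email):
    """Replace an email with a keyed hash so records can be linked without exposing it."""
    return hmac.new(SECRET_KEY, email.lower().encode("utf-8"), hashlib.sha256).hexdigest()

# Hypothetical role-to-permission mapping (access criteria).
ROLE_PERMISSIONS = {
    "analyst": {"read"},
    "engineer": {"read", "write"},
}

def can_access(role, action):
    """Return True only if the role has been granted the requested action."""
    return action in ROLE_PERMISSIONS.get(role, set())

token = pseudonymise("Jane.Doe@example.com")
print(token[:12], can_access("analyst", "write"), can_access("engineer", "write"))
```

A keyed hash is used here instead of a plain hash so that someone without the key cannot simply hash a list of known emails and match them against the stored values.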

Conclusion

In the coming years, data processing is likely to become much faster. The expansion of the cloud makes it possible to handle this task remotely with adequate security. As a result, the turnaround time for data processing will shorten.